When people talk about cyberattacks, many still picture an outdated scenario: a phishing email arrives, someone opens the attachment, a malicious file lands on the server, the antivirus either detects it or doesn’t, and the usual story unfolds. The latest Mandiant M-Trends 2026 report paints a very different picture, and it is a harsher and more unpleasant one for anyone whose work involves server infrastructure. For hosting providers, this is bad news across the board, because attackers are increasingly targeting the very places where a client’s entire technical infrastructure resides: public-facing services, control panels, edge devices, backups, account systems, and the virtualization layer. In other words, the nodes that many previously viewed as merely supporting functions.

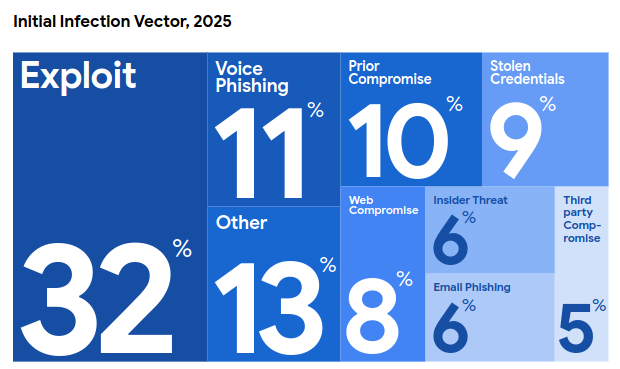

Of course, the hosting industry has always been at risk; there’s nothing new there. What’s new is the nature of the attacks themselves. Mandiant reports that in 2025, exploits were once again the most common initial infection vector, for the sixth consecutive year. They accounted for 32% of investigations in which the entry point was identified. In second place is voice-based social engineering, or vishing, at 11%. Email phishing, meanwhile, dropped to 6%, down from 14% in 2024. The landscape has shifted: an attacker no longer needs to send mass emails and risk being blocked for spam. Sometimes it’s enough to call the help desk, impersonate a company employee, and convince someone to reset a password, re-enroll MFA, or approve access for a “legitimate” SaaS application. For owners of VPS or dedicated servers, this means one simple thing: an attack increasingly begins not with a malicious file on the disk, but with trust, haste, and human error.

Source: Mandiant M-Trends 2026

It’s fair to say that servers have moved closer to the front line. Previously, a web server might have been viewed as just one part of the business; now it is often the first door attackers try to break through. Public websites, CRMs, admin panels, self-hosted services, control panels, VPN gateways, webmail interfaces, virtualization servers, API endpoints: all of these face the internet in one way or another, and rest assured, automated scanners probe them nonstop. Mandiant specifically notes that in 2025, the most frequently exploited vulnerabilities were in internet-facing enterprise platforms: SAP NetWeaver, Oracle E-Business Suite, and Microsoft SharePoint. The logic here is clear.

If a single vulnerability gives immediate access to a system that holds documents, internal processes, service accounts, and footholds for moving further across the network, that is exactly where the attacker will go. This is a bad sign for hosting providers also because, on the client side, there is very often neither a maintenance window for rapid updates nor a mature vulnerability management process. The server “works,” and “if it works, don’t touch it.” That holds only until the first breach.

There’s another unpleasant detail. The report explicitly states that sometimes less than 30 seconds pass between initial access and the handoff of that access to another group. This looks very much like a new type of infrastructure threat. One actor finds a vulnerable server and gains a foothold, and access is almost immediately passed to those who know how to squeeze the most out of the compromise: encrypting data, stealing information, destroying backups, and sabotaging recovery efforts. In the past, many teams were in the habit of treating a “minor” alert as trivial. A suspicious command in the console, a brief anomaly in the logs, a strange login, an unclear PowerShell script, a temporary web shell in a website directory: all of these were often pushed to the back of the task queue. Now that is a luxury. In modern attacks, a signal that seems insignificant at first glance may be visible for mere seconds before a completely different stage of the attack begins.
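One of those “minor” signals, a web shell dropped into a website directory, can at least be looked for routinely. The Python sketch below is a hypothetical first-pass triage helper, not a method from the Mandiant report: it assumes a PHP site, walks the web root, and lists script files modified within a recent time window that contain calls commonly abused by web shells. The function name `find_suspicious_scripts`, the list of PHP functions, and the default window are illustrative assumptions. Legitimate code uses the same calls and attackers can reset file timestamps, so a hit is a lead for review, not proof of compromise.

```python
import re
import time
from pathlib import Path

# Hypothetical, non-exhaustive list of PHP calls often abused by web shells.
# Legitimate code uses them too, so a match is a lead to review, not proof.
SUSPICIOUS_CALLS = re.compile(
    rb"\b(eval|assert|system|shell_exec|passthru|base64_decode)\s*\("
)
SCRIPT_SUFFIXES = {".php", ".phtml", ".phar"}


def find_suspicious_scripts(web_root, max_age_seconds=3600, now=None):
    """Return sorted paths of recently modified scripts matching SUSPICIOUS_CALLS."""
    now = time.time() if now is None else now
    hits = []
    for path in Path(web_root).rglob("*"):
        if not path.is_file() or path.suffix.lower() not in SCRIPT_SUFFIXES:
            continue
        # Skip files older than the window; note that mtime can be forged.
        if now - path.stat().st_mtime > max_age_seconds:
            continue
        try:
            content = path.read_bytes()
        except OSError:
            continue  # unreadable file, e.g. permission denied
        if SUSPICIOUS_CALLS.search(content):
            hits.append(str(path))
    return sorted(hits)
```

On a typical host it might be run as `find_suspicious_scripts("/var/www/html", max_age_seconds=86400)`, where the path is an assumption about where the site lives. Restricting the scan to recently changed files keeps it cheap enough to run often, which matters when the window between a foothold and the next stage is this short.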

For those who use hosting services, an important conclusion follows from this. Hosting can no longer be evaluated solely on CPU, RAM, and disk space; that approach is long outdated. A customer can rent a powerful server on a good plan, with fast NVMe storage and plenty of cores, but if the control panel hasn’t been updated, if tokens are stored in plain text in a repository, and if there’s no proper access control on the hypervisor, then all these “resources” simply become a convenient platform for someone else’s work or games. And this no longer applies only to enterprises: small businesses, online store owners, studios, developers, and even personal self-hosted projects on a VPS all fall under the same logic.
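Plain-text tokens in a repository are easy to look for before an attacker does. Below is a minimal sketch in Python, assuming a checked-out repository on disk: it matches a few well-known credential formats (AWS access key IDs, classic GitHub personal access tokens, PEM private key headers) plus a generic `password = "..."` style assignment. The rule set and the helpers `scan_text` and `scan_tree` are illustrative assumptions; dedicated secret scanners ship far larger rule sets and also search Git history, which this sketch does not.

```python
import re
from pathlib import Path

# Illustrative rules only; real secret scanners ship far more of them.
PATTERNS = {
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Classic GitHub personal access tokens: "ghp_" plus 36 alphanumerics.
    "github_classic_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    # Header line of a PEM-encoded private key (RSA, EC, OpenSSH, ...).
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # Generic `password = "..."`-style assignment with a value of 8+ characters.
    "generic_assignment": re.compile(
        r"(?i)(password|passwd|secret|api_key|token)\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text):
    """Return (rule_name, line_number) pairs for every rule that matches a line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((name, lineno))
    return findings


def scan_tree(root):
    """Scan every readable text file under root, skipping the .git directory."""
    results = {}
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or ".git" in path.parts:
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # binary or unreadable file
        findings = scan_text(text)
        if findings:
            results[str(path)] = findings
    return results
```

Running `scan_tree(".")` from the root of a project returns a dictionary of file paths to findings. Any real hit should be treated as already leaked: rotate the credential first, then remove it from the code.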

It’s particularly striking how much the nature of ransomware has changed. Until recently, the general perception of this type of attack was fairly simple: attackers came in, stole or encrypted files, left a note, and demanded a ransom. Mandiant describes a shift that looks far more ominous: ransomware operators are increasingly targeting the victim’s ability to recover. Their goal is no longer just the data; they now go after backup infrastructure, identity services, and virtualization management planes. This is a very telling point. The attacker understands that recovery is the victim’s last chance to avoid paying the ransom, so it is the recovery process itself that must be disrupted. Not the archive as such, but the organization’s very ability to get back on its feet after an incident.

The report explicitly advises keeping off-site and air-gapped copies of backup data and regularly testing recovery from an isolated environment. It sounds boring, but when applied to real life, this is one of the most useful insights in the entire document.

A backup that no one has ever restored in a test is just a hope in the form of a file. When an attack hits your hosting, there’s no time for philosophy. You need to quickly spin up a VM, roll back the project, restore the database, recover the control panel, check consistency, and relaunch the service. That’s when it turns out that some of the archives are corrupted, the recovery script is outdated, the keys are missing, and the snapshot you need is stored in the same network segment where the attacker is already present. Given how critical this issue is, we even published a separate article on the importance of backups.
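Part of that test can be automated. The sketch below assumes the backup is a tar archive and that a SHA-256 manifest of the source files was recorded when the backup was taken; it restores the archive into a throwaway directory and compares checksums file by file. The helper names `build_manifest` and `verify_restore` are hypothetical. A passing check only proves the files come back intact; it does not replace a full rehearsal on an isolated machine where the database is actually loaded and the service is started, which is what the report’s advice about testing recovery from an isolated environment implies.

```python
import hashlib
import tarfile
import tempfile
from pathlib import Path


def sha256_of(path):
    """Stream a file through SHA-256 so large dumps need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(source_dir):
    """Map each file's path (relative to source_dir, POSIX style) to its checksum."""
    root = Path(source_dir)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_restore(archive_path, manifest):
    """Extract archive_path into a scratch directory and compare it to manifest.

    Returns a list of problems; an empty list means every file came back intact.
    """
    problems = []
    with tempfile.TemporaryDirectory() as scratch:
        with tarfile.open(archive_path, "r:*") as tar:
            # The "data" filter (Python 3.12+, and recent security releases of
            # older versions) refuses members that would escape `scratch`.
            kwargs = {"filter": "data"} if hasattr(tarfile, "data_filter") else {}
            tar.extractall(scratch, **kwargs)
        restored = build_manifest(scratch)
    for name, expected in manifest.items():
        actual = restored.get(name)
        if actual is None:
            problems.append(f"missing: {name}")
        elif actual != expected:
            problems.append(f"checksum mismatch: {name}")
    for name in sorted(restored.keys() - manifest.keys()):
        problems.append(f"unexpected: {name}")
    return problems
```

In practice the manifest would be kept apart from the archive, for example with the off-site copy, so that an attacker who tampers with the backup cannot quietly rewrite the expected checksums as well.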

The virtualization layer is now also a high-priority target, and this makes sense. If an attacker gains control over the environment hosting a client’s virtual machines, they immediately reach a new level of impact: they can shut VMs down, take snapshots, read and delete data, interfere with recovery, and disrupt connectivity across the infrastructure in every possible way. For a dedicated server the risk looks slightly different, but the essence is similar: the attack targets the point where control is concentrated, specifically the IP-KVM interfaces. For VPS customers, this is doubly bad news, because the average virtual server user almost never sees how well the layer above them is protected. They see only their own machine and their SSH access, while the real scale of the risk may lie one level higher.

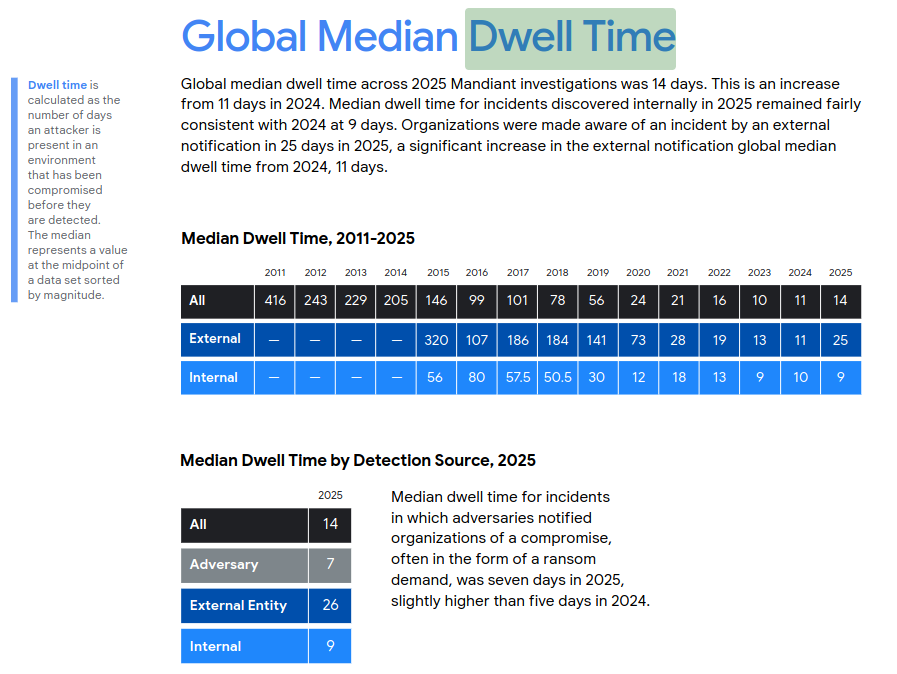

The dwell time statistics are also striking. The global median time an attacker spends in the environment before detection has risen from 11 to 14 days. When the organization detects the intrusion itself, the median is about 9 days; when it is notified by an external party, nearly 25 days. For a web server owner, these figures are almost tangible. Two weeks is a long time, and during that period an attacker can download the customer database, plant a web shell, establish persistence using legitimate tools, prepare for lateral movement, collect tokens, read configuration files, disable security controls, access backups, and only then move to the active phase.

This is especially true if the attacker operates quietly and relies on standard system functions. Mandiant notes separately that financially motivated groups and espionage groups actively exploit native capabilities of on-premises and cloud environments, as well as legitimate tools. This leads to the unpleasant realization that signature-based malware protection long ago stopped providing the level of security many still rely on. If an attacker works through PowerShell, PsExec, RDP, SMB, rclone, network scanners, built-in OS utilities, and legitimate RMM tools, their behavior becomes much harder to distinguish from routine administrative work.

Source: Mandiant M-Trends 2026
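Because these tools are legitimate, the useful question is usually not “is this tool malicious?” but “does this tool normally run on this host?”. Below is a minimal sketch of that baselining idea in Python, assuming process-execution events are already collected as `(host, executable path)` pairs from some log source, and that a per-host allowlist of expected admin tools exists. The watch list, the data shapes, and the function names are illustrative assumptions, not a Mandiant detection rule.

```python
from pathlib import PureWindowsPath

# Hypothetical watch list: tools named above, plus pwsh (PowerShell 7's binary)
# and mstsc (the Windows RDP client). All are legitimate admin tools.
DUAL_USE_TOOLS = {"powershell", "pwsh", "psexec", "rclone", "mstsc"}


def normalize(executable):
    """Reduce a Windows or POSIX path to a lowercase tool name without '.exe'."""
    # PureWindowsPath accepts both '\\' and '/' as path separators.
    name = PureWindowsPath(executable).name.lower()
    return name[: -len(".exe")] if name.endswith(".exe") else name


def flag_unexpected(events, baseline):
    """Return sorted (host, tool) pairs where a dual-use tool ran off-baseline.

    events:   iterable of (host, executable_path) tuples
    baseline: dict mapping host -> set of tool names expected on that host
    """
    flagged = set()
    for host, executable in events:
        tool = normalize(executable)
        if tool in DUAL_USE_TOOLS and tool not in baseline.get(host, set()):
            flagged.add((host, tool))
    return sorted(flagged)
```

The design choice here is to alert on context rather than on the tool itself: PowerShell on an admin jump host is expected, while PsExec appearing on a database server or rclone on a web server is exactly the kind of quiet signal that otherwise blends into routine work.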

Interestingly, email phishing hasn’t disappeared; it’s simply no longer the main player. Its share has dropped, but it’s still used to expand an already ongoing compromise. For web projects and hosting clients, there’s a secondary risk here that’s often overlooked, since email and server compromises reinforce each other very effectively.

Through email, attackers can steal credentials and expand their reach; through the server, they can deploy fake pages, phishing forms, redirects, hidden APIs, and malicious attachments. And then the client’s infrastructure becomes part of someone else’s campaign.

Artificial intelligence also makes a brief appearance in the report, but without any exaggerated hype. Mandiant doesn’t claim that 2025 was the year AI started wreaking havoc on its own. The picture is much calmer, and therefore more plausible. Yes, AI is used, but as an amplifier: for initial reconnaissance, for social engineering, and to accelerate specific stages of an attack. This does not change the main point: the number of successful intrusions continues to grow because of human error and system vulnerabilities.

Here’s an interesting point for customers of virtual and dedicated servers. Previously, the security question often looked like a dispute over the division of responsibility: where the provider’s area ends and the customer’s begins. Formally, this is a useful framework, but in practice an attack easily crosses both sides. The provider may maintain the network and hypervisor perfectly, while the client leaves an outdated CMS, unnecessary sudo privileges, and a public bucket containing data dumps. The client may manage their project impeccably, while the provider overlooks a weak administrative panel or exposed access to the backup infrastructure. Suddenly the whole debate about whose responsibility it is becomes rather pointless, because the damage is already shared: the server is down, data has leaked, email isn’t working, and the website is inaccessible. Then recovery begins, and as I mentioned above, that is precisely what attacks increasingly target.

If you look at all this through the eyes of a typical VPS project owner, some fairly obvious conclusions follow. The server is now at risk not only because port 22 is open or because it runs WordPress. It is at risk because it sits within a complex network of connections, where Git and CI/CD, domain-based email, CDNs, and backups are woven into an intricate web, along with the administrator’s laptop, Telegram, Slack, and much, much more. Any of these points can become an entry point. Sometimes an attacker isn’t particularly interested in the server itself until they find a convenient way to reach it through another asset.

And here we arrive at the main unpleasant conclusion for the entire industry. Hosting used to be associated exclusively with hosting servers (websites) and everything around that: stability, uptime, fast connectivity, and transparent pricing. Now, however, it is increasingly associated with whether the client will survive an external attack.

This is already a different market, even if many still speak and think within the old paradigm. It’s not enough for a client to know how many cores their VM has. They increasingly want to understand how well their provider can hold up across as many crisis scenarios as possible.

The Mandiant report is good because it doesn’t resort to scare tactics for their own sake; it’s quite sober. I would summarize its main point as follows: attacks have moved closer to the infrastructure, closer to day-to-day administrative work, and closer to human procedures. That is why hosting is now under greater pressure. Not because “everyone suddenly started attacking servers”; servers were attacked frequently before, too. The pressure has increased because today a server is part of a large interconnected system, and attackers have learned to operate within that system quickly, with a clear division of roles, and with the aim of making recovery impossible for the victim.

For owners of websites, web servers, VPS, and dedicated servers, a very simple conclusion follows. It is no longer enough to check just the project; you must check the entire ecosystem around it, because attacks have changed. They have become faster, and hosting, as it turns out, has long ceased to be merely a backdrop for business: it is one of its most critical components, and choosing it requires careful consideration.